Inside the 2024 Precinct Project

Collecting, Cleaning, and Validating Our Nationwide Dataset of Precinct Returns

The 2024 release of our precinct-level election returns dataset is the culmination of a multi-year effort by a team of over a dozen research staff, graduates, and undergraduates to provide a nationwide dataset of general election returns for local, state, and federal offices.

Our data provide vote counts for more than 54,000 candidates running for over 12,000 offices across 200,000 precincts, making this our most extensive compilation of precinct-level data so far. The MIT Election Data and Science Lab (MEDSL) first produced precinct-level data for the 2018 election and has improved its coverage and data quality in subsequent elections. For this release, we’ve provided a revamped codebook and improved our quality assurance process in pursuit of making this our most extensive, accurate, and accessible release yet. Here’s how we did it.

Get the data

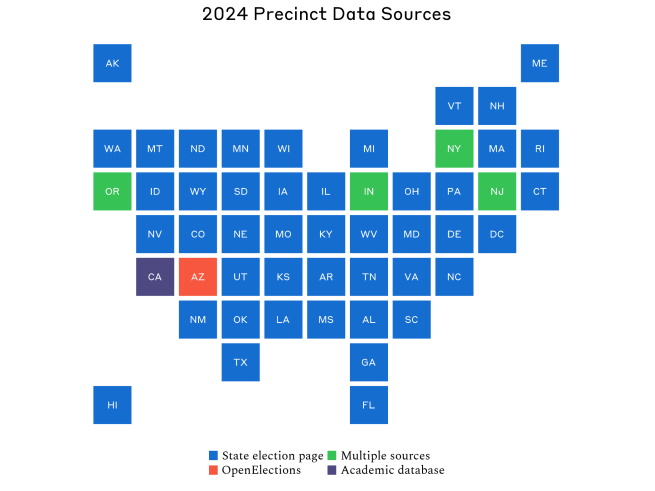

American federalism offers some advantages—laboratories of democracy, states checking federal power, and so on—but clean and easy-to-access election data isn’t one of them. The decentralized nature of US election administration, while making our system more resilient, yields election return data varying wildly in accessibility, format, quality, and completeness both between and within states. Some states, like California, have centralized repositories making data collection a relative breeze, while others leave it to counties to individually publish election return data.

Our first stop when gathering a state’s data is the state election authority’s website. In the best case, we find the data we need here, ideally as spreadsheets, less ideally as documents, and least ideally as a dashboard that has to be scraped or transcribed by hand. In some cases, the data are removed or overwritten following the election but can be recovered using the Wayback Machine or clever URL guessing.
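As a rough illustration of the Wayback Machine step, the sketch below asks the Internet Archive's availability endpoint for the snapshot closest to a given date. The function names and the example address in the usage note are hypothetical, and this is not a description of the scripts we actually use.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

# Public availability endpoint of the Internet Archive's Wayback Machine.
WAYBACK_API = "https://archive.org/wayback/available"


def availability_url(page_url, timestamp):
    """Build a query for the snapshot closest to `timestamp` (e.g. YYYYMMDD)."""
    query = urlencode({"url": page_url, "timestamp": timestamp})
    return f"{WAYBACK_API}?{query}"


def closest_snapshot(payload):
    """Return the archived URL from a decoded API response, or None if none exists."""
    closest = payload.get("archived_snapshots", {}).get("closest")
    if closest and closest.get("available"):
        return closest["url"]
    return None


def find_snapshot(page_url, timestamp):
    """Query the endpoint over the network and return the closest snapshot URL, if any."""
    with urlopen(availability_url(page_url, timestamp), timeout=30) as response:
        return closest_snapshot(json.load(response))
```

Calling `find_snapshot("example.gov/results", "20241115")` with a real results page in place of the placeholder address would return either an archived URL or `None`. Any recovered page still has to be checked by a person before it is treated as a source.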

In the worst case, when precinct-level data are not provided at the state level, we turn to county election authorities’ websites to perform data collection county by county. With the average state containing around 60 counties, it can be incredibly time-consuming when even a few states require this treatment. If, after this, data still cannot be found for a county, we have two options. The first is drawing on other election data providers, most often OpenElections. We generally avoid doing so unless absolutely necessary because we see value in our data being independently collected so it can be validated against other sources. The second is reaching out directly to local election offices when all else fails. We leave this as a last resort so as not to burden election officials who already have enough on their plates, especially in an election year.

Data collection is the first part of our workflow where advances in multimodal large language models (LLMs) now play a role. Since we started collecting precinct data, manually searching for and collecting the raw data has often been more efficient than writing scrapers or using browser automation tools like Selenium or Playwright, especially as firewalls, CAPTCHAs, and other methods to prevent web crawling are now widespread. It will come as no surprise to those following the cybersecurity space that LLMs find workarounds with relative ease. Sometimes this manifests as an AI writing sophisticated scraping scripts; other times it’s as blunt as screenshotting a webpage and extracting text from it. More recently, AI providers have rolled out computer and browser control features that allow agents to navigate your computer’s screen as a human would.

Existing browser automation tools can accomplish similar tasks but are intentionally brittle with a fairly steep learning curve as they are intended for developers to test their software. AI browser control tools grant the ability to direct an agent to collect data from an arbitrary number of webpages with a single prompt and no real setup. Of course, there is no free lunch—agents have endless opportunities to misbehave, doing anything from hallucinating data to falling victim to malicious prompt-injection attacks. Extreme caution has to be taken to use these tools safely and effectively.

Clean the data

Getting the raw data from states is, at most, a fifth of the battle. The bulk of our effort goes to parsing and standardizing those raw data with the overarching goal of accurately preserving the information they contain whenever possible. We encounter data in all manner of fantastic and horrifying formats, but raw data files generally fall into one of three buckets:

Tabular

When data come in tabular files (.csv, .tsv, .xlsx, etc.), cleaning is generally a straightforward matter of standardizing entries to be consistent with our codebook through pivoting, recoding, and merging in geographic identifiers like FIPS codes.
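To make the pivot, recode, and merge steps concrete, here is a minimal sketch using only the Python standard library. The raw layout, the candidate names, and the output column names are hypothetical stand-ins rather than our codebook; the two county FIPS codes are the real ones for Baker and Wallowa counties in Oregon.

```python
import csv
import io

# Hypothetical raw export: one row per precinct, one column per candidate.
RAW = """county,precinct,Jane Doe,John Smith
Baker,Precinct 1,120,95
Wallowa,Precinct 2,80,101
"""

# Small lookup for illustration; a full one would come from a Census reference file.
COUNTY_FIPS = {"BAKER": "41001", "WALLOWA": "41063"}

ID_COLUMNS = ("county", "precinct")


def wide_to_long(raw_text, county_fips):
    """Pivot candidate columns into rows, recode text to upper case, attach FIPS codes."""
    rows = []
    for record in csv.DictReader(io.StringIO(raw_text)):
        county = record["county"].strip().upper()
        for column, value in record.items():
            if column in ID_COLUMNS:
                continue  # identifier columns are carried along, not pivoted
            rows.append({
                "county_name": county,
                "county_fips": county_fips[county],  # KeyError flags an unknown county
                "precinct": record["precinct"].strip().upper(),
                "candidate": column.strip().upper(),
                "votes": int(value),
            })
    return rows
```

Letting an unknown county raise a `KeyError` is a deliberate choice in this sketch: a failed merge is easier to catch at this stage than a blank identifier discovered later.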

Born-digital documents

At least as common as tabular files are documents (.pdf) created on a computer (i.e., they are not scans of a physical document). Some states, like Kentucky, report election results in documents with the exact same format across counties (often PDF exports of spreadsheets). We parse these documents with tools like pdfplumber, which read the text and layout embedded in the file and so do not need optical character recognition (OCR). This is usually straightforward for born-digital documents (though angled text and complicated tables still prove tricky) but still requires tailoring an extraction script for a given file or file format. LLM-based coding assistants like GitHub Copilot, Claude Code, and GPT Codex can generate scripts to extract and wrangle data from these documents with limited prompting.

Digitized documents

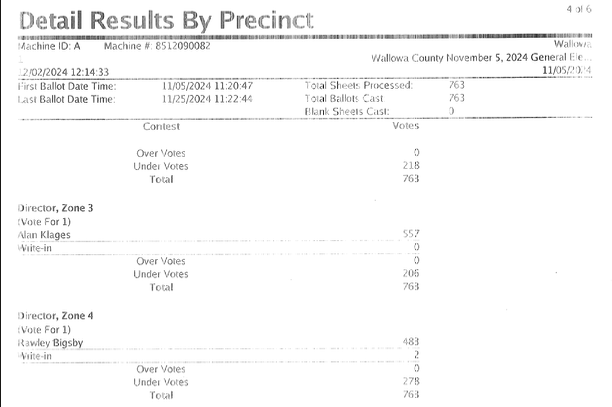

Scans of physical documents are the bane of our existence in this process. In most cases, these do not already have an OCR layer (i.e., you cannot search for text within them) and cannot have one easily applied due to differing page orientations, poor resolution, artifacting, or being cut off. See, for example, the case from Wallowa County, OR shown above. For most of the project’s history, such cases required manual extraction, which is both extremely time-consuming and prone to errors: just about anyone’s eyes will glaze over as they try to transcribe hundreds of pages that look like the example. While we still resort to manual extraction when absolutely necessary, AI tools considerably reduce the need.

When it comes to creating a simple to moderately complex parsing script for born-digital documents, we haven’t found one frontier model to perform considerably better than another. However, for the more difficult cases of digitized documents like Wallowa County, we often had the most success using Gemini 3. It shouldn’t come as much of a surprise that Google, which has digitized tens of millions of books in the process of creating Google Books, has the comparative advantage. (Don’t take our word for it; check out how historians have been using it for texts even more indecipherable than our own.) That said, anecdotally, OpenAI and Anthropic seem to be improving their models’ abilities to process digitized documents.

An LLM excursion

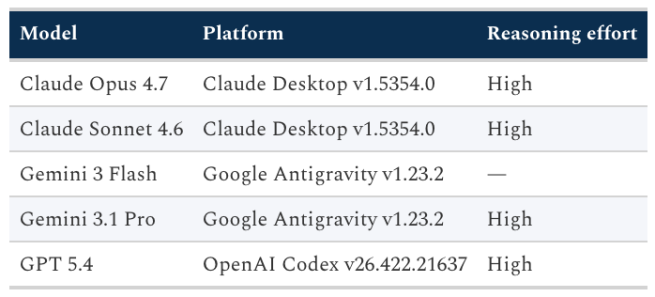

As an exercise in understanding the capabilities of AI for our data extraction tasks, I fed the following prompt to the frontier models in the table above:

Wallowa.pdf contains precinct-level election returns for the 2024 general election in Wallowa County, Oregon. Extract the election returns from the file and wrangle them to create a single CSV file called wallowa.csv with the variables detailed in README.md. You may refer to Oregon's precinct-level returns from 2022 which can be found in or22.csv to better understand how to format the data. When you are done, a single Python script, wallowa.py, should be able to create wallowa.csv. Write a concise 3-4 sentence report detailing the approach used to accomplish this and save it to report.txt.

I then audited the approach and output of each model, using the cleaned and quality-assured data we wrangled for Wallowa County as a reference. All models were prompted from within their native desktop app for macOS (Google Antigravity, Codex, and Claude for Desktop) from an account with the base paid plan. Of course, how the models performed with a minimal one-shot prompt from one user is not a robust test of overall model performance for OCR tasks, since a key part of using these models effectively is acting as a sort of manager, checking in and nudging them in the right direction as they work. However, this exercise does give a sense of their varied approaches and limitations. For a similar and slightly more thorough exercise, see this excellent writeup from OpenElections.

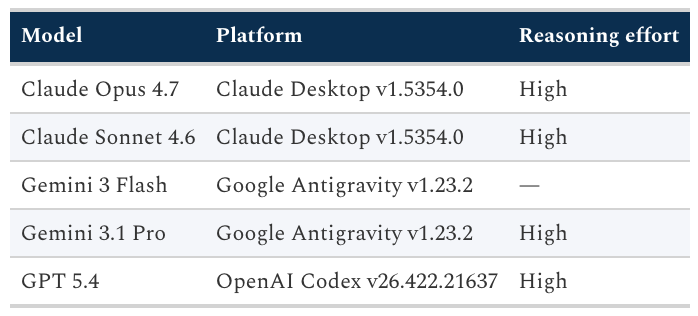

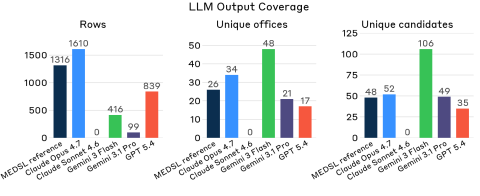

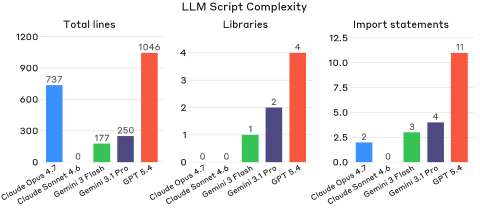

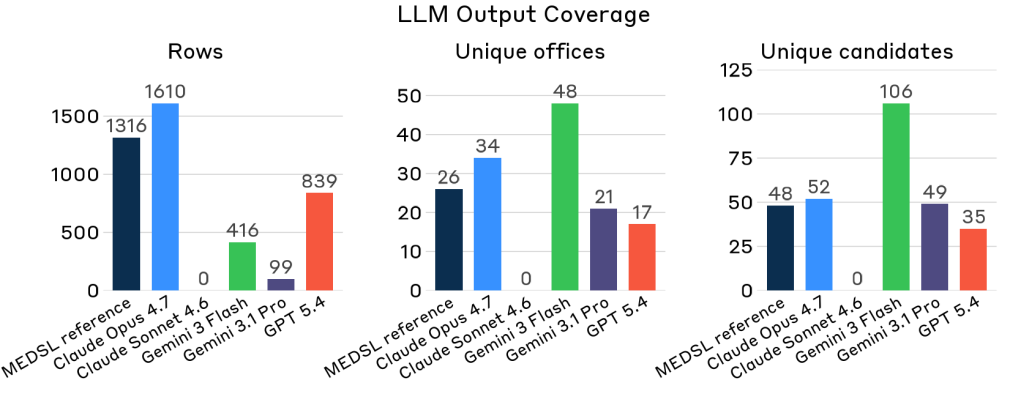

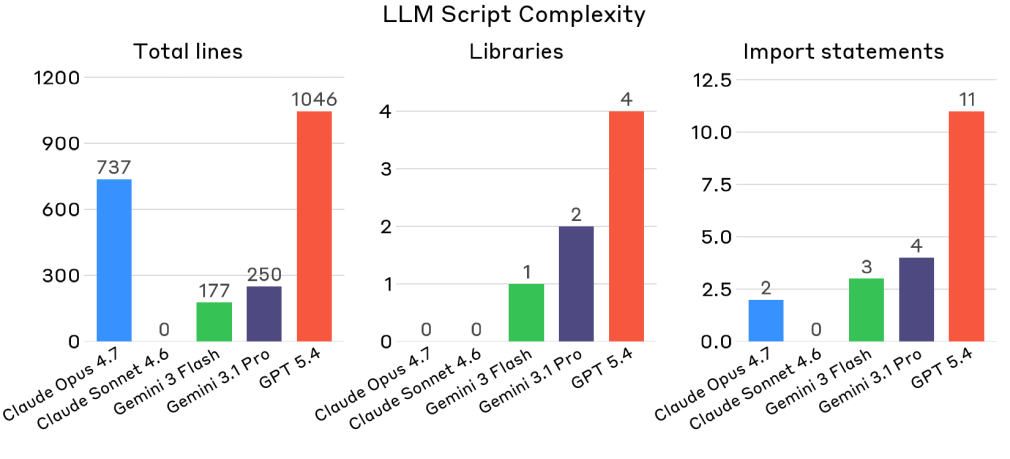

The figures above visualize key characteristics of the CSV file and Python script produced by each model. One thing is immediately clear: Anthropic takes the cake for having both the best- and worst-performing models for the task. Claude Opus produced an output with more rows than any other model and a number of rows closest to the reference data. It also produced a similar number of unique offices and candidates, overshooting the reference data only slightly because it extracted some statistical aggregations that are present in the raw data but that we drop when producing the reference data. Claude Sonnet, on the other hand, fell flat on its face, ultimately producing no CSV file or script at all.

Let’s briefly go through the experience of prompting each of these models.

Gemini Flash

Staying true to its name, this model was by far the fastest to claim it had finished the task, but that speed came at the cost of both quality and cleanliness: it output only 416 rows, with office and candidate counts inflated well beyond what the full election actually contains. Rather than normalizing OCR noise into canonical strings, the script collected every garbled variant as its own record. The approach was sensible enough (run Tesseract over all 65 pages, concatenate the output, parse with regex), but the normalization logic wasn’t up to the task of the messy scans.
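One simple way to normalize OCR noise into canonical strings, sketched here under stated assumptions, is fuzzy matching against a known list of names with Python's built-in `difflib`. The canonical list, the noisy inputs in the usage note, and the 0.75 cutoff are all hypothetical; this is not what any of the models wrote.

```python
from difflib import get_close_matches

# Hypothetical canonical names; in practice these might come from a candidate filing list.
CANONICAL = ["JANE DOE", "JOHN SMITH"]


def canonicalize(raw, canonical=CANONICAL, cutoff=0.75):
    """Map a noisy OCR string to its closest canonical form, or None if nothing is close."""
    # Collapse runs of whitespace and upper-case before comparing.
    cleaned = " ".join(raw.upper().split())
    matches = get_close_matches(cleaned, canonical, n=1, cutoff=cutoff)
    return matches[0] if matches else None
```

With these inputs, `canonicalize("Jane D0e")` maps to `"JANE DOE"`, while an unrelated string such as `"0VERVOTES"` returns `None` and can be routed to a person for review instead of becoming its own candidate record. The cutoff trades false merges against missed matches and would need tuning on real scans.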

Gemini 3.1 Pro

In contrast to Gemini Flash, this model took its sweet time (and credits), spending 10 minutes coming up with an implementation plan, asking for approval, and then burning through the rest of its quota writing 16 Python scripts without finishing the task. It ultimately produced just 99 rows, the least complete output of any model that produced a CSV file, despite generating a more ambitious test-driven scaffold than any of the others. Though its 49 unique candidate strings nearly match the reference’s 48, few of them are actual names. Instead, they’re OCR noise that the incomplete parser never got the chance to clean up.

GPT 5.4

This model tried to veer off in a different direction entirely, deciding the Wallowa PDF was so difficult that it was better off searching the web for a cleaner data source. After I intervened to redirect it back to the PDF, it spent about an hour constructing a solid pipeline: render the pages, run RapidOCR, group bounding boxes into lines, match offices against a list of contest specs, and when the text is too garbled to parse a number, fall back to cropping the vote column and classifying individual digit glyphs with a k-NN model trained on the same document. Yet the resulting 839 rows, roughly 64% of the reference, show the limits of how far even a sophisticated pipeline can get on a scan of this quality.

Claude Sonnet 4.6

Far more surprising than the failure of Gemini 3.1 Pro was Sonnet’s poor showing. The first attempt to complete the task yielded only an error:

API Error: Claude's response exceeded the 32000 output token maximum. To configure this behavior, set the CLAUDE_CODE_MAX_OUTPUT_TOKENS environment variable.

The second time around was met with a different error:

API Error: An image in the conversation exceeds the dimension limit for many-image requests (2000px). Start a new session with fewer images.

Even after modifying the prompt to explicitly warn against running into these errors, the model took well over an hour to produce a couple of intermediate JSON files with extracted text and bounding boxes. While doing so, on two occasions, the model found the reference dataset on the machine and tried to cheat, even after being told explicitly not to read any files outside the workspace. Not quite a sandwich in the park moment, but not exactly reassuring.

Claude Opus 4.7

Opus hit the same wall as Sonnet initially, throwing an API error after working for several minutes, though it had already begun generating page renders and OCR text files before the session died. After starting fresh with a prompt that warned the model about the token and image limits, it took a fundamentally different approach than any of the others: rather than writing a script to run OCR at execution time, Opus read the PDF pages visually while writing the script, transcribing every vote figure directly into Python data structures. The result is by far the most complete output—1,610 rows (including statistical aggregations present in the raw data but which we drop in our reference data) covering all 12 precincts and every office present in the reference data—with a built-in self-check that verifies each contest's vote components sum to the reported total. Opus’ success comes as little surprise given its reputation at the time of writing of being the most sophisticated consumer model around.
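A self-check of the kind described above can be sketched in a few lines. This is an illustration of the idea, not the code Opus wrote; the row layout and the `TOTAL VOTES` label are hypothetical.

```python
from collections import defaultdict

TOTAL_LABEL = "TOTAL VOTES"  # hypothetical label for the reported-total row


def find_sum_mismatches(rows):
    """Return {(precinct, office): (summed, reported)} for contests that do not add up."""
    summed = defaultdict(int)
    reported = {}
    for row in rows:
        key = (row["precinct"], row["office"])
        if row["candidate"] == TOTAL_LABEL:
            reported[key] = row["votes"]
        else:
            summed[key] += row["votes"]
    # Only contests with a reported total can be checked.
    return {
        key: (summed[key], total)
        for key, total in reported.items()
        if summed[key] != total
    }
```

An empty result means every contest with a reported total adds up. It does not prove the transcription is right, since two offsetting digit errors would still pass, but it catches the most common single-cell mistakes.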

Ensure data quality

Once we’ve collected and extracted the data for a state, it enters our quality assurance (QA) process, which comprises three phases. In the first phase, the wrangler (the person performing extraction for the state) assigns a member of the research team as the first reviewer. This reviewer performs a series of validation checks on the data and provides detailed feedback about issues with the data. The wrangler then applies fixes to address reviewer feedback before kicking the data back to the reviewer for another round of QA; rinse and repeat until the reviewer no longer sees any issues. In the second phase, a second reviewer performs validation checks independently. If they find further issues, the state goes back to the first phase; otherwise, they give their stamp of approval. At this point, we make the data available in our 2024 election data repository so the public can use it and report issues they encounter.

After all states pass two rounds of QA, we enter the final phase in which the research director addresses issues reported by the public and performs a final round of validation checks. Sometimes, as was the case for Indiana this time around, additional data have been released since our initial collection, necessitating further wrangling and rounds of QA.

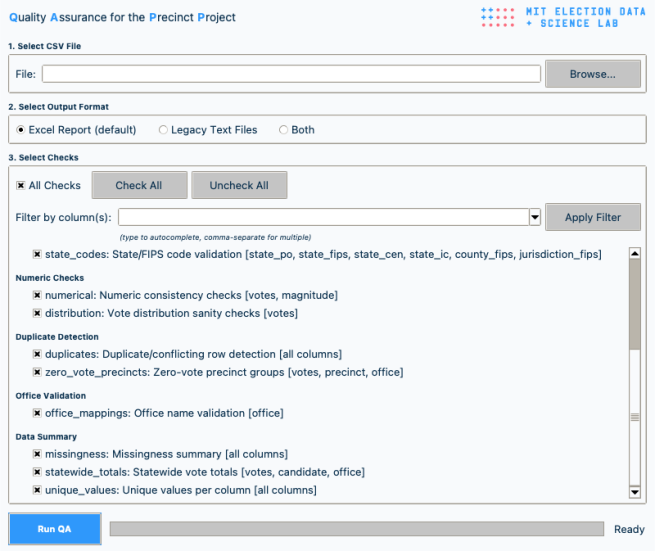

You may be wondering what our validation checks actually check. Our workhorse for automated checks is an internal tool called QAPP (Quality Assurance for the Precinct Project) that expands on a QA engine built by a previous research associate, Marcos Zárate, and the previous research director, Samuel Baltz. Rather than having reviewers manually hunt for common errors, it runs around 200 checks across 15 categories and produces a single report indicating what may need closer inspection. Some of these checks are basic: are all required columns present, are values in the expected format, and are state and county codes consistent with official reference files? Others look for problems that can be difficult to spot, such as duplicate or conflicting rows, blank candidate names, non-numeric or negative vote totals, suspicious zero-vote precincts, and inconsistent district labels.
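To give a flavor of what row-level checks like these look like, here is a minimal sketch covering four of them: missing columns, blank candidate names, non-numeric or negative votes, and duplicate rows. It is not QAPP's code, and the column names and messages are hypothetical.

```python
from collections import Counter

REQUIRED = ("precinct", "office", "candidate", "votes")  # hypothetical column names


def check_rows(rows):
    """Return (row_index, message) pairs for rows that deserve a closer look."""
    issues = []
    seen = Counter()
    for i, row in enumerate(rows):
        missing = [col for col in REQUIRED if col not in row]
        if missing:
            issues.append((i, f"missing columns: {', '.join(missing)}"))
            continue  # the remaining checks need every required column
        if not str(row["candidate"]).strip():
            issues.append((i, "blank candidate name"))
        votes = str(row["votes"]).strip()
        if not (votes.isascii() and votes.isdigit()):
            # Catches negatives ("-3") as well as text or empty cells.
            issues.append((i, f"votes not a non-negative integer: {votes!r}"))
        key = (row["precinct"], row["office"], row["candidate"])
        seen[key] += 1
        if seen[key] > 1:
            issues.append((i, "duplicate precinct/office/candidate row"))
    return issues
```

Like the tool it imitates, this sketch only reports; it never edits a row. Deciding whether a flagged duplicate is an error or a legitimate repeat stays with the reviewer.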

QAPP also helps us check whether the dataset makes sense as a whole, not just row by row. It summarizes missing or unusual values, flags unusual vote distributions, and, perhaps most importantly, it aggregates precinct results up to statewide totals for major offices so we can compare them against state-level results where available. The engine does not modify the data on its own; it only directs attention to the places where errors are most likely and allows the reviewer to document issues for the wrangler to fix.
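The aggregation check can be sketched as follows, assuming precinct rows with hypothetical `office`, `candidate`, and `votes` fields and a dictionary of official statewide totals keyed the same way. Again, this illustrates the idea rather than QAPP's implementation.

```python
from collections import defaultdict


def statewide_totals(precinct_rows):
    """Sum precinct-level votes into {(office, candidate): total}."""
    totals = defaultdict(int)
    for row in precinct_rows:
        totals[(row["office"], row["candidate"])] += row["votes"]
    return dict(totals)


def compare_to_official(precinct_rows, official):
    """Return {(office, candidate): (aggregated, official)} wherever the two differ."""
    aggregated = statewide_totals(precinct_rows)
    return {
        key: (aggregated.get(key, 0), expected)
        for key, expected in official.items()
        if aggregated.get(key, 0) != expected
    }
```

A candidate who appears in the official results but not in the precinct data shows up with an aggregated total of zero, which makes wholly missing contests as visible as miscounted ones.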

As with data collection and wrangling, we also incorporate AI tools into our QA process. Agents can use the output of QAPP to propose systematic or surgical fixes for data errors and apply them with human approval. This is especially helpful when fixes require careful string pattern matching and manipulation, e.g. reformatting or standardizing text entries like office or candidate names. In the days of old, this required a human to write a script that used regular expressions to match on specific patterns in the text.

For example, a regular expression we used to identify statewide ballot measures in Oregon looks like this:

r"^(?:QUESTION|STATE MEASURE|MEASURE)?\s*(?:[0-9]{1,2}-)?(11[5-9])\b(.*)$"

Getting this expression to work as intended would take even an experienced programmer several minutes and a few Stack Overflow searches; an AI coding assistant can construct it almost instantaneously.
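To see what the expression quoted above actually captures, the short sketch below compiles it unchanged and applies it to a few office strings. The helper name `parse_measure` and the sample strings are hypothetical; only the pattern comes from our pipeline.

```python
import re

# The pattern quoted above, unchanged.
MEASURE_RE = re.compile(
    r"^(?:QUESTION|STATE MEASURE|MEASURE)?\s*(?:[0-9]{1,2}-)?(11[5-9])\b(.*)$"
)


def parse_measure(office):
    """Return (measure_number, remaining_text) for measures 115 to 119, else None."""
    match = MEASURE_RE.match(office.strip().upper())
    if match is None:
        return None
    number, remainder = match.groups()
    return number, remainder.strip()
```

For instance, `parse_measure("State Measure 117 Example Title")` yields `("117", "EXAMPLE TITLE")`, while `"MEASURE 120"` and `"1150"` both return `None`: the character class `[5-9]` limits the last digit, and the word boundary `\b` rejects longer numbers that merely start with 115.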

What lies ahead

Our effort to produce a nationwide dataset of precinct-level returns continues to evolve as we refine our data pipeline, learn from previous releases, and incorporate new technologies. The 2024 dataset took over 500 days from when polls closed on November 5, 2024 to be ready for release. However, as the work required to produce the data can increasingly be automated, be it collecting, extracting, or quality assuring the data, we hope to considerably reduce the time between when states report official results and when we release our dataset.

But this is not without challenges. For one, advances in AI mean a rapidly changing understanding of how one can effectively use these tools—whether it’s optimizing prompts, orchestrating subagents, or knowing when to use skills. Consensus around any of these is elusive, changing day by day and model to model. A downstream consequence, exacerbated by the stochastic nature of LLMs, is the potential to create data (and ultimately research) that cannot be reproduced.

To date, most social science work probing this focuses on the problem posed by non-determinism in text annotation tasks. But even using LLMs to collect and wrangle data, as we do, has the potential to cause reproducibility problems if care isn’t taken. Suppose a human wrangler comes across a particularly awful digitized document containing a county’s data and asks Gemini to extract it. Gemini spits out a CSV file with cleaned data that looks reasonable enough and seems to accurately reflect the raw data but silently omits a few races, and the file passes through QA and is published with these races missing. When a user eventually encounters and (hopefully) reports the error, it will not be possible to determine what went wrong with the extraction. The document will have to be entirely reparsed and QAed, consuming time and model credits that could have been saved if the wrangler had simply prompted Gemini to write a script that produces the cleaned data from the raw.

A broader challenge presented by AI is the redistribution of labor instigated by automation of entry-level tasks. The oft-repeated example is coding assistants replacing the junior developer who eventually becomes the senior developer, but researchers face a similar dilemma. Much academic research has traditionally rested on the work of undergraduate and graduate research assistants performing repetitive data entry, cleaning, and classification tasks, the very tasks AI models excel at. While not the most thought-provoking or interesting work, there is an argument to be made that getting one’s hands dirty with data in this way is essential to developing the skills and expertise needed to succeed at high-level tasks. But not too long ago one may have said the same thing to a junior developer about the necessity of learning assembly language to effectively program in C++.

In the context of wrangling election data, there is validity to both perspectives. It may very well be the case that students can now gain more practical experience learning how to effectively automate data tasks rather than doing them manually or with human-written code. Yet a deep understanding of the structure and limitations of the underlying data is still indispensable when conducting rigorous research.